TDP v4.2.1 Release Notes

Release date: 5 February 2025

TetraScience has released its next version of the Tetra Data Platform (TDP), version 4.2.1. This release introduces functional data harmonization and engineering enhancements, including a new attribute download option and improved metadata functionality. It also includes enhanced scaling for the TDP’s authorization service, new System Log events for platform upgrades, and several helpful UI improvements.

Here are the details for what’s new in TDP v4.2.1.

Security

TetraScience continually monitors and tests the TDP codebase to identify potential security issues. Various security updates are applied to the following areas on an ongoing basis:

- Operating systems

- Third-party libraries

Quality Management

TetraScience is committed to creating quality software. Software is developed and tested following the ISO 9001-certified TetraScience Quality Management System. This system ensures the quality and reliability of TetraScience software while maintaining data integrity and confidentiality.

New Functionality

New functionalities are features that weren’t previously available in the TDP.

- There is no new functionality in this release

GxP Impact Assessment

All new TDP functionalities go through a GxP impact assessment to determine validation needs for GxP installations. New Functionality items marked with an asterisk (*) address usability, supportability, or infrastructure issues, and do not affect Intended Use for validation purposes, per this assessment. Enhancements and Bug Fixes do not generally affect Intended Use for validation purposes, and items marked as either beta release or early adopter program (EAP) are not suitable for GxP use.

Enhancements

Enhancements are modifications to existing functionality that improve performance or usability, but don't alter the function or intended use of the system.

Performance and Scale Enhancements

Enhanced Scaling for Authorization*

To help prevent login issues as more users access the TDP, auto scaling performance for the platform’s authorization service has been improved.

Data Harmonization and Engineering Enhancements

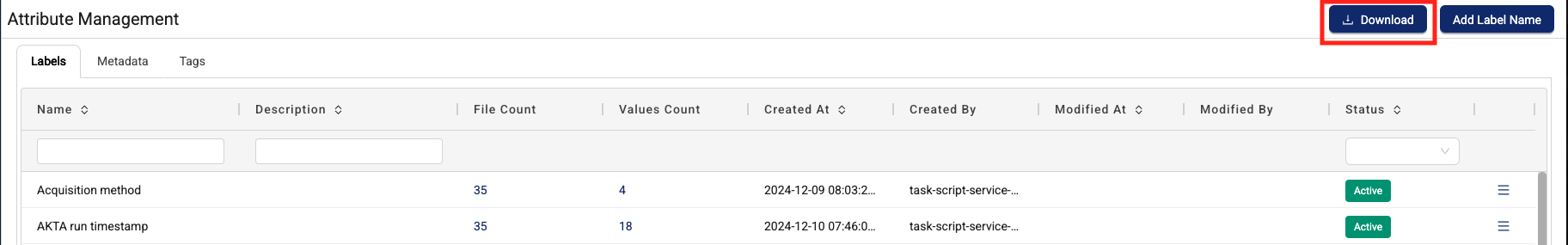

New Download Option for Attributes*

Customers can now download their organization’s attributes as a CSV file by using the new Download button on the Attribute Management page.

For more information, see Manage and Apply Attributes.

Attribute Management page Download button

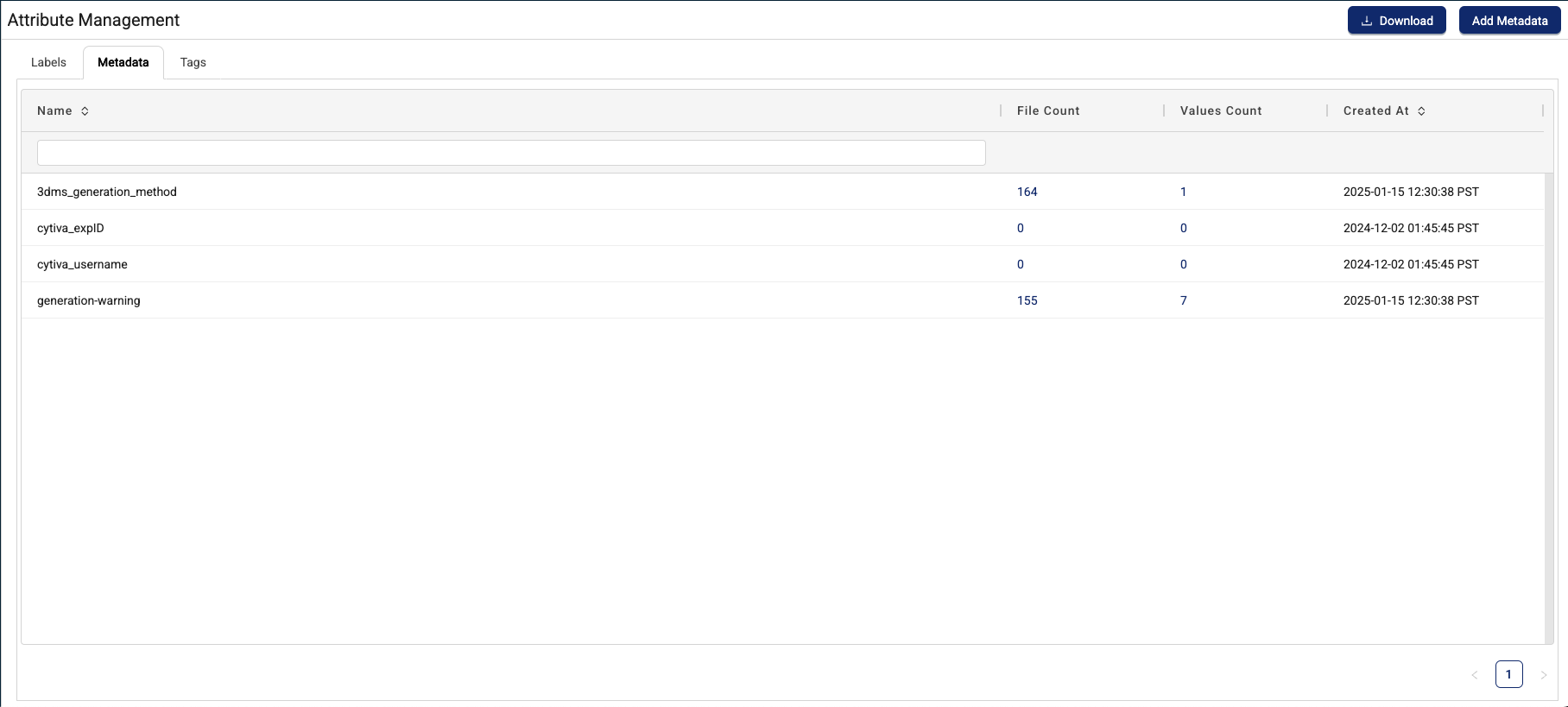

Improved Metadata Functionality*

Metadata functionality has been improved to further meet FAIR data principles.

The following enhancements have been made to the Metadata tab on the Attribute Management page:

- It’s now simpler to view all files with a certain metadata name, and then review all available metadata values.

- Customers can now filter by metadata names or values, and then explore data with those attributes on the Search page in one click.

For more information, see Review and Manage Metadata.

Metadata tab

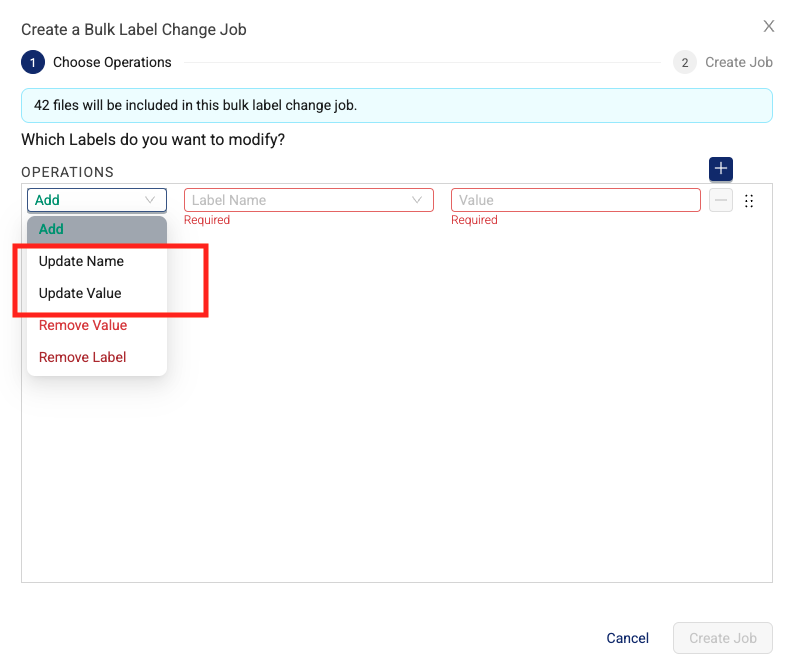

Clearer Update Operations for Bulk Label Change Jobs*

To make it clearer what update operations in the Create a Bulk Label Change Job dialog do, the previous, single Update operation is now two different operations:

- Update Name: Modifies a label’s name for each file

- Update Value: Modifies a label’s value for each file

The previous Update operation provided the option to update a label’s name, its values, or both. No changes have been made to the other Bulk Label Change Job operations (Add, Remove Value, and Remove Label).

For more information, see Edit Labels in Bulk.

Create a Bulk Label Change Job dialog

References to Retry Behavior Settings Are Now Consistent*

References to specific retry behavior settings are now consistent across the Pipeline Manager and Pipeline Edit pages.

For more information, see Retry Behavior Settings.

TDP System Administration Enhancements

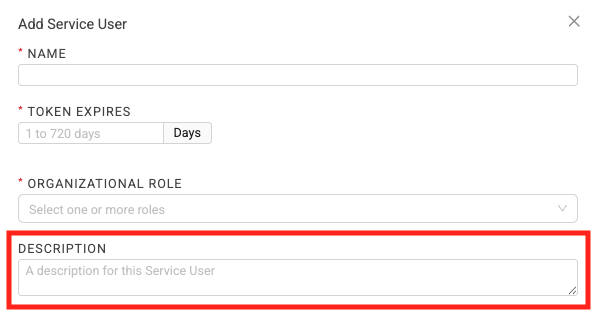

Updated on: 5 June 2025 (added new description field for service users)

New Description Field for Service Users

Added on: 5 June 2025

System administrators now have the option to enter a Description when creating or editing Service Users. This field can provide an indication of what the Service User is for.

For more information, see Add a Service User and Edit a Service User.

New TDP Upgrade Events*

To help track TDP environment upgrades, the System Log now includes the following platform events:

- Upgrade started: Shows when a TDP environment upgrade starts.

- Upgrade successful: Shows when a TDP environment upgrade completes successfully.

- Upgrade failed: Shows when a TDP environment upgrade fails to complete.

For more information, see System Log.

New Tag for All TDP AWS Resources*

All TDP AWS infrastructure resources now include a new tetrascience_product tag. The new tag helps improve platform security and streamline infrastructure management, but has no effect on the performance or usability of the TDP.
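For teams that manage the AWS account hosting their TDP deployment, the following is a minimal sketch of how resources carrying the new tag could be listed. It assumes access to the AWS Resource Groups Tagging API through boto3. The function name `iter_tagged_resource_arns` is hypothetical, and the tag's values aren't stated in these notes, so the sketch filters on the tag key only.

```python
from typing import Any, Dict, Iterator

TAG_KEY = "tetrascience_product"  # tag key named in these release notes


def iter_tagged_resource_arns(client: Any, tag_key: str = TAG_KEY) -> Iterator[str]:
    """Yield the ARN of every resource that carries the given tag key.

    `client` is expected to behave like
    boto3.client("resourcegroupstaggingapi"). It is passed in as an
    argument so the function can be exercised without AWS credentials.
    """
    token = ""
    while True:
        # Filtering on the key alone matches the tag regardless of its value.
        kwargs: Dict[str, Any] = {"TagFilters": [{"Key": tag_key}]}
        if token:
            kwargs["PaginationToken"] = token
        page = client.get_resources(**kwargs)
        for mapping in page.get("ResourceTagMappingList", []):
            yield mapping["ResourceARN"]
        # An empty or missing token means there are no more pages.
        token = page.get("PaginationToken", "")
        if not token:
            break
```

With boto3 installed and credentials configured, the function could be called as `list(iter_tagged_resource_arns(boto3.client("resourcegroupstaggingapi")))`. The call only covers the Region that the client is configured for.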

Infrastructure Updates

There are no infrastructure changes in this release.

Bug Fixes

The following bugs are now fixed.

Data Harmonization and Engineering Bug Fixes

- On the Pipeline Manager page, in the Pipeline Info section, the MAX RETRIES and BASE RETRY DELAY (IN SECONDS) fields no longer appear when No retry is selected for a pipeline’s RETRY BEHAVIOR.

- Customers can now use labels with dots (.) in searches and in pipeline triggers.

Data Access and Management Bug Fixes

- IDS SQL tables with only parent_uuid and uuid columns are now consistently generated during ingestion into the Data Lakehouse. The following IDS SQL tables were previously affected:

  - liquid_handler_to_freezer_cell_inventory_v2_runs

  - mass_spectrometer_mzml_v2_methods

  - mass_spectrometer_mzml_v2_results

  - hardness_tester_pharmatron_8m_v2_results

Deprecated Features

There are no new deprecated features in this release.

For more information about TDP deprecations, see Tetra Product Deprecation Notices.

Known and Possible Issues

Last updated: 3 March 2026

The following are known and possible issues for TDP v4.2.1.

Data Harmonization and Engineering Known Issues

- On the Pipeline Edit page, in the Retry Behavior field, the Always retry 3 times (default) value sometimes appears as a null value.

- File statuses on the File Processing page can sometimes display differently than the statuses shown for the same files on the Pipelines page in the Bulk Processing Job Details dialog. For example, a file with an Awaiting Processing status in the Bulk Processing Job Details dialog can also show a Processing status on the File Processing page. This discrepancy occurs because each file can have different statuses for different backend services, which can then be surfaced in the TDP at different levels of granularity. A fix for this issue is in development and testing.

- Logs don’t appear for pipeline workflows that are configured with retry settings until the workflows complete.

- Files with more than 20 associated documents (high-lineage files) do not have their lineage indexed by default. To identify and re-lineage-index any high-lineage files, customers must contact their CSM to run a separate reconciliation job that overrides the default lineage indexing limit.

- OpenSearch index mapping conflicts can occur when a client or private namespace creates a backwards-incompatible data type change. For example: If doc.myField is a string in the common IDS and an object in the non-common IDS, then it will cause an index mapping conflict, because the common and non-common namespace documents are sharing an index. When these mapping conflicts occur, the files aren’t searchable through the TDP UI or API endpoints. As a workaround, customers can either create distinct, non-overlapping version numbers for their non-common IDSs or update the names of those IDSs.

- File reprocessing jobs can sometimes show fewer scanned items than expected when either a health check or out-of-memory (OOM) error occurs, but not indicate any errors in the UI. These errors are still logged in Amazon CloudWatch Logs. A fix for this issue is in development and testing.

- File reprocessing jobs can sometimes incorrectly show that a job finished with failures when the job actually retried those failures and then successfully reprocessed them. A fix for this issue is in development and testing.

- File edit and update operations are not supported on metadata and label names (keys) that include special characters. Metadata, tag, and label values can include special characters, but it’s recommended that customers use the approved special characters only. For more information, see Attributes.

- The File Details page sometimes displays an Unknown status for workflows that are either in a Pending or Running status. Output files that are generated by intermediate files within a task script sometimes show an Unknown status, too.
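To illustrate the index mapping conflict described in the list above, the following sketch compares two sample documents and reports field paths whose coarse data type differs. It is an illustration only and doesn't reproduce OpenSearch's mapping logic. The function names and sample documents are hypothetical.

```python
from typing import Any, Dict, List, Tuple


def field_kinds(doc: Dict[str, Any], prefix: str = "") -> Dict[str, str]:
    """Map each dotted field path in a document to a coarse kind."""
    kinds: Dict[str, str] = {}
    for key, value in doc.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            kinds[path] = "object"
            kinds.update(field_kinds(value, prefix=f"{path}."))
        elif isinstance(value, str):
            kinds[path] = "string"
        elif isinstance(value, bool):  # checked before int: bool subclasses int
            kinds[path] = "boolean"
        elif isinstance(value, (int, float)):
            kinds[path] = "number"
        else:
            kinds[path] = type(value).__name__
    return kinds


def find_conflicts(
    common_doc: Dict[str, Any], other_doc: Dict[str, Any]
) -> List[Tuple[str, str, str]]:
    """Return (path, kind in common_doc, kind in other_doc) for each mismatch."""
    a, b = field_kinds(common_doc), field_kinds(other_doc)
    return sorted((p, a[p], b[p]) for p in a.keys() & b.keys() if a[p] != b[p])


# Hypothetical documents that mirror the doc.myField example above.
common = {"doc": {"myField": "abc"}}
non_common = {"doc": {"myField": {"value": "abc"}}}
print(find_conflicts(common, non_common))
```

A check like this could be run on representative documents before a non-common IDS is published under the same name and version as a common IDS.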

Data Access and Management Known Issues

Last updated: 3 March 2026

- The Download selected files action replaces each run of the following characters with an underscore (_) if they appear before the file extension in file names: forward slash, backslash, colon, asterisk, question mark, angle brackets, pipe, and dot. These characters are matched by the regular expression /[/\\:*?<>|.]+/gu. As a workaround, customers should compare the names of downloaded files against the source file names in the TDP and then rename them if required. A fix for this issue is in development and testing and is scheduled for TDP v4.4.4. (Added on 3 March 2026)

- When customers upload a new file on the Search page by using the Upload File button, the page doesn’t automatically update to include the new file in the search results. As a workaround, customers should refresh the Search page in their web browser after selecting the Upload File button. A fix for this issue is in development and testing and is scheduled for a future TDP release.

- If an IDS file has a field that ends with a backslash (\) escape character, the file can’t be uploaded to the Data Lakehouse. These file ingestion failures happen because escape characters currently trigger the Lakehouse data ingestion rules. The errors are recorded on the Files Health Monitoring dashboard in the File Failures section. There is no way to reconcile these file failures at this time. A fix for this issue is in development and testing and is scheduled for TDP v4.2.1.

- Values returned as empty strings when running SQL queries on SQL tables can sometimes return Null values when run on Lakehouse tables. As a workaround, customers taking part in the Data Lakehouse Architecture EAP should update any SQL queries that specifically look for empty strings to instead look for both empty string and Null values.

- The Tetra FlowJo Data App doesn’t load consistently in all customer environments.

- Query DSL queries run on indices in an OpenSearch cluster can return partial search results if the query puts too much compute load on the system. This behavior occurs because the OpenSearch search.default_allow_partial_results setting is configured as true by default. To help avoid this issue, customers should use targeted search indexing best practices to reduce query compute loads. A way to improve visibility into when partial search results are returned is currently in development and testing and scheduled for a future TDP release.

- Text within the context of a RAW file that contains escape (\) or other special characters may not always index completely in OpenSearch. A fix for this issue is in development and testing, and is scheduled for an upcoming release.

- If a data access rule is configured as [label] exists > OR > [same label] does not exist, then no file with the defined label is accessible to the Access Group. A fix for this issue is in development and testing and scheduled for a future TDP release.

- File events aren’t created for temporary (TMP) files, so they’re not searchable. This behavior can also result in an Unknown state for Workflow and Pipeline views on the File Details page.

- When customers search for labels in the TDP UI’s search bar that include either @ symbols or some unicode character combinations, not all results are always returned.

- The File Details page displays a 404 error if a file version doesn't comply with the configured Data Access Rules for the user.
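To support the file name comparison workaround for the Download selected files issue in the list above, the following sketch predicts the downloaded name for a source file name. It reuses the character class from that issue. It assumes that the file extension is everything after the last dot, which these notes don't state. The function names are hypothetical.

```python
import re
from typing import Dict, Iterable

# Character class copied from the known issue. The "+" collapses each run of
# matching characters into a single underscore.
_REPLACED = re.compile(r"[/\\:*?<>|.]+")


def predicted_download_name(source_name: str) -> str:
    """Guess the name a file receives from the Download selected files action.

    Assumption: the extension is the text after the last dot.
    """
    stem, dot, ext = source_name.rpartition(".")
    if not dot:  # no dot at all, so treat the whole name as the stem
        return _REPLACED.sub("_", source_name)
    return _REPLACED.sub("_", stem) + dot + ext


def names_needing_rename(source_names: Iterable[str]) -> Dict[str, str]:
    """Map each source name to its predicted download name when they differ."""
    changed: Dict[str, str] = {}
    for name in source_names:
        predicted = predicted_download_name(name)
        if predicted != name:
            changed[name] = predicted
    return changed


print(names_needing_rename(["plain.txt", "plate.1:results.csv"]))
```

The returned mapping can serve as a checklist of downloaded files to rename back to their source names.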

TDP System Administration Known Issues

- The latest Connector versions incorrectly log the following errors in Amazon CloudWatch Logs:

  - Error loading organization certificates. Initialization will continue, but untrusted SSL connections will fail.

  - Client is not initialized - certificate array will be empty

These organization certificate errors have no impact and shouldn’t be logged as errors. A fix for this issue is currently in development and testing, and is scheduled for an upcoming release. There is no workaround to prevent Connectors from producing these log messages. To filter out these errors when viewing logs, customers can apply the following CloudWatch Logs Insights query filters when querying log groups. (Issue #2818)

CloudWatch Logs Insights Query Example for Filtering Organization Certificate Errors

    fields @timestamp, @message, @logStream, @log
    | filter message != 'Error loading organization certificates. Initialization will continue, but untrusted SSL connections will fail.'
    | filter message != 'Client is not initialized - certificate array will be empty'
    | sort @timestamp desc
    | limit 20

- If a reconciliation job, bulk edit of labels job, or bulk pipeline processing job is canceled, then the job’s ToDo, Failed, and Completed counts can sometimes display incorrectly.
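For customers who run the organization certificate filter from this section in scripts rather than in the CloudWatch console, the following sketch assembles the same query string from a list of messages to exclude. The function name `build_insights_query` is hypothetical. Messages that contain a single quote are rejected instead of escaped, because these notes don't describe quoting rules.

```python
from typing import Iterable

# The two log messages listed in the known issue above.
NOISY_MESSAGES = (
    "Error loading organization certificates. Initialization will continue, "
    "but untrusted SSL connections will fail.",
    "Client is not initialized - certificate array will be empty",
)


def build_insights_query(
    excluded: Iterable[str] = NOISY_MESSAGES, limit: int = 20
) -> str:
    """Assemble a Logs Insights query that filters out the given messages."""
    parts = ["fields @timestamp, @message, @logStream, @log"]
    for text in excluded:
        if "'" in text:
            raise ValueError("messages containing single quotes are not handled")
        parts.append(f"filter message != '{text}'")
    parts.append("sort @timestamp desc")
    parts.append(f"limit {limit}")
    return " | ".join(parts)


print(build_insights_query())
```

The resulting string can be passed as the queryString argument of the boto3 CloudWatch Logs client's start_query call, together with a log group name and a time range that are specific to each environment.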

Upgrade Considerations

During the upgrade, there might be a brief downtime when users won't be able to access the TDP user interface and APIs.

After the upgrade, the TetraScience team verifies that the platform infrastructure is working as expected through a combination of manual and automated tests. If any failures are detected, the issues are immediately addressed, or the release can be rolled back. Customers can also verify that TDP search functionality continues to return expected results, and that their workflows continue to run as expected.

For more information about the release schedule, including the GxP release schedule and timelines, see the Product Release Schedule.

For more details about the timing of the upgrade, customers should contact their CSM.

Other Release Notes

To view other TDP release notes, see Tetra Data Platform Release Notes.
