TetraScience AI Services User Guide (v1.2.x)

This guide shows how to use TetraScience AI Services versions 1.2.x.

Prerequisites

TetraScience AI Services requires the following:

- Tetra Data Platform (TDP) v4.4.1 or higher

- A TDP role that includes at least one of the following policy permissions:

Access AI Services

To access TetraScience AI Services, do the following:

- Sign in to the Tetra Data Platform (TDP) as a user with one of the required policy permissions.

- In the left navigation menu, choose Artifacts.

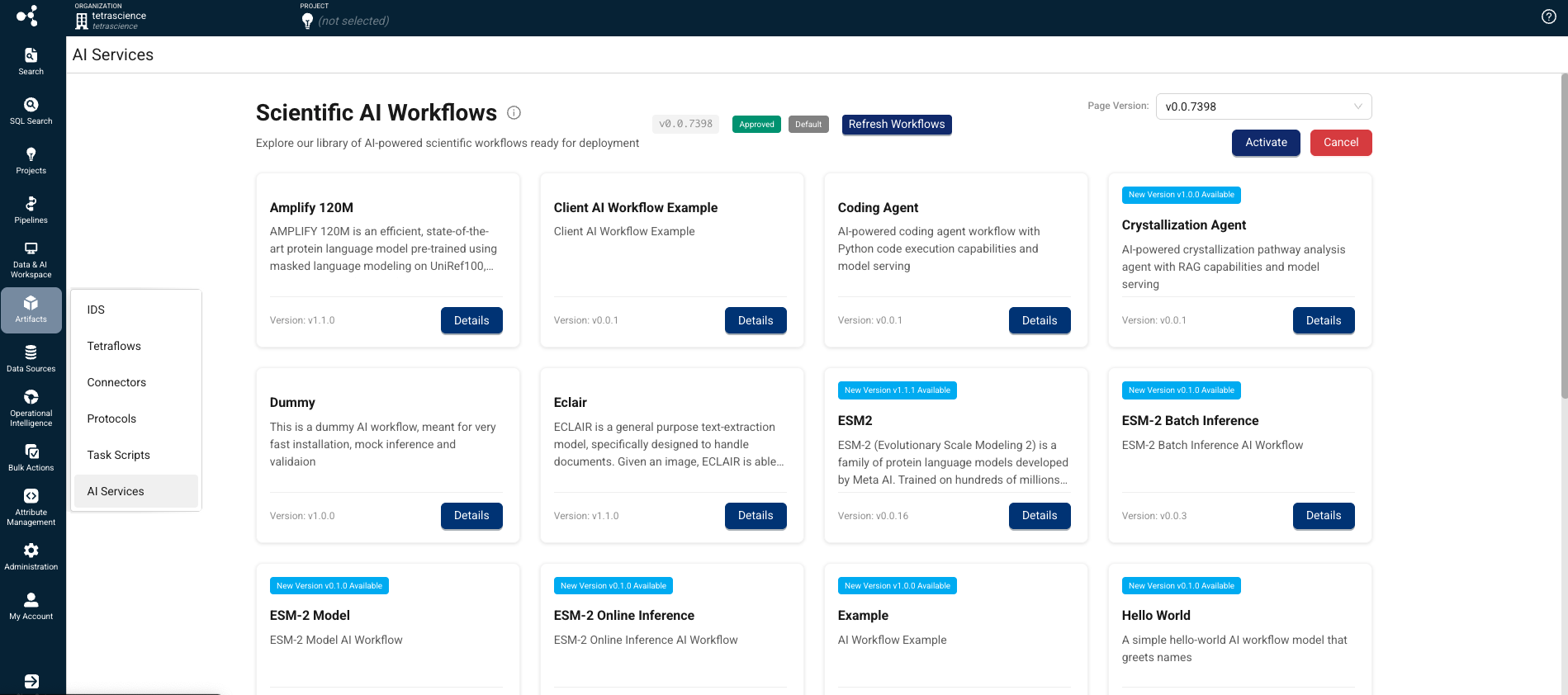

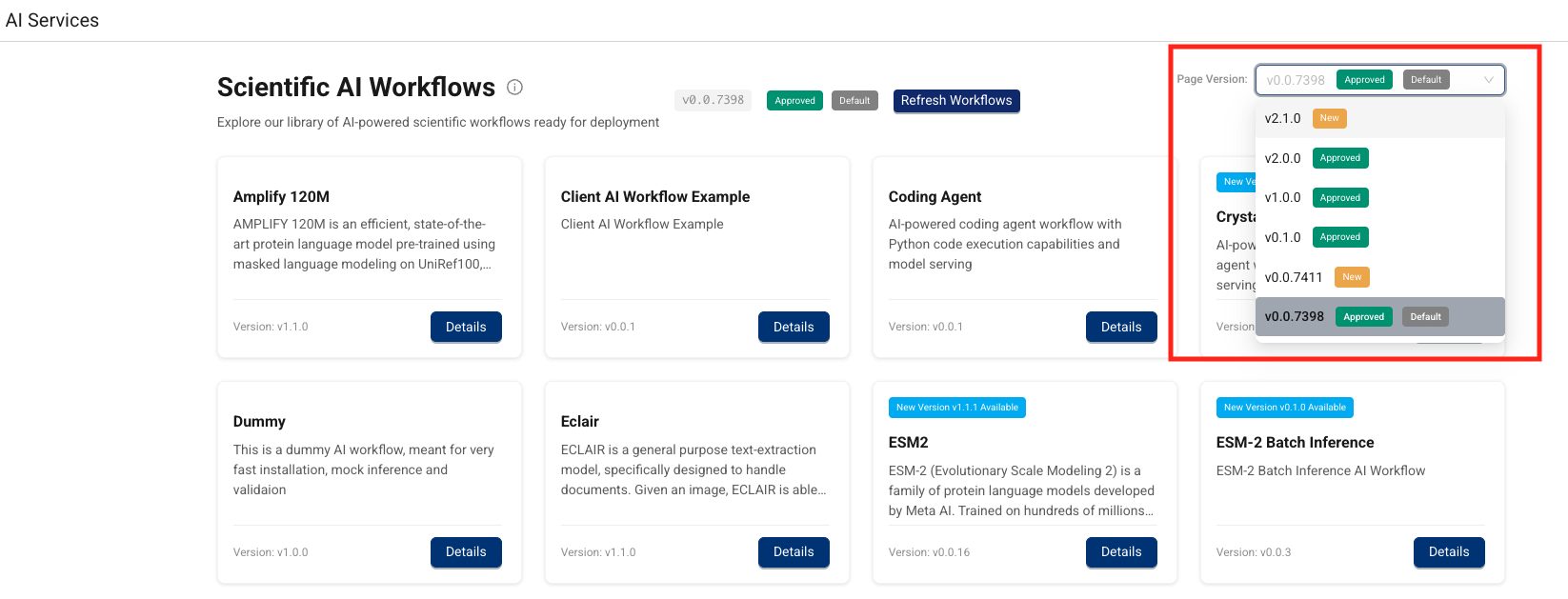

- Select AI Services. The Scientific AI Workflows page appears.

On the Scientific AI Workflows page, you can do any of the following:

- Find a Scientific AI Workflow for your use case: Browse and review workflow versions with associated metadata.

- Activate a Scientific AI Workflow: Admins can activate a specific workflow version to make it available for authorized users.

- Install a Scientific AI Workflow: Provision the required compute resources on demand, resulting in an inference endpoint that you can call programmatically.

- Uninstall a Scientific AI Workflow: Remove the compute resources for a workflow without deleting its artifacts or versions.

- Update the AI Services user interface version: Update the AI Services UI to the latest version.

You can also monitor Scientific AI Workflow jobs through the TDP Health Monitoring Dashboard and System Log.

To run an inference using an installed Scientific AI Workflow, see Run an Inference (AI Services v1.2.x).

Find a Scientific AI Workflow for Your Use Case

To browse Scientific AI Workflows by version and metadata, do the following:

- Open the AI Services page as a user with one of the following policy permissions:

- Tenant Admin

- Organization Admin

- Developer

- Machine Learning Engineer

The Scientific AI Workflows page appears and displays the AI Workflows available to your organization.

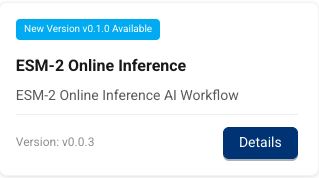

- Find the workflow for your use case. Each workflow's tile displays its name, description, and current version number. Workflows that have new versions available are marked with a New Version vx.x.x Available banner. To get more information about a specific workflow, or to install it, select the workflow's Details button.

NOTE

If you don't see a Scientific AI Workflow for your use case, contact your customer account leader. The ability to create custom AI Workflows isn't currently available, but is planned for a future AI Services release.

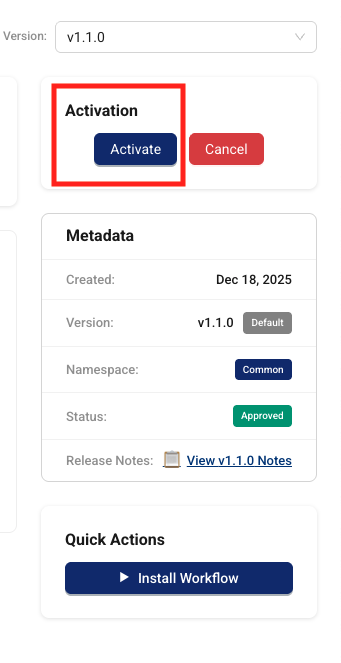

Activate a Scientific AI Workflow

To activate a specific AI workflow version and make it available to authorized users in your organization, do the following:

- Open the AI Services page as a user with one of the following policy permissions:

- Find the workflow that you want to activate.

- Choose the workflow tile's Details button. The AI Workflow's page appears.

- In the Validation tile, choose Activate. The AI workflow is now activated and is available to authorized users in your organization through the AI Services page.

Install a Scientific AI Workflow

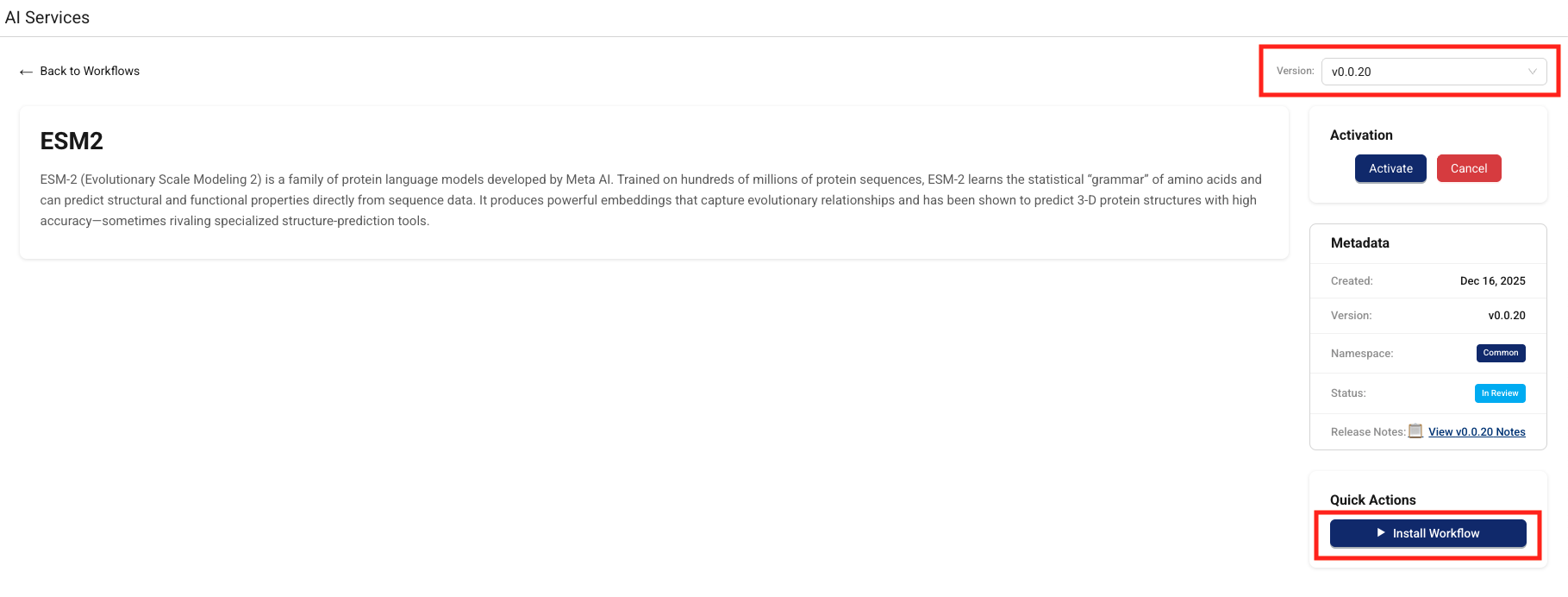

To provision compute resources for a Scientific AI Workflow on demand and get an inference URL that you can call to run an inference, do the following:

- Open the AI Services page as a user with one of the following policy permissions:

- Find the workflow that you want to install and receive a new inference URL for.

- Choose the workflow tile's Details button. The AI Workflow's page appears.

- In the Version dropdown, select the workflow version that you want to install.

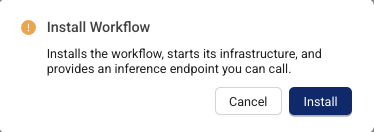

- Choose Install Workflow. The Install Workflow dialog appears and prompts you to confirm that you want to install the workflow. Choose Install.

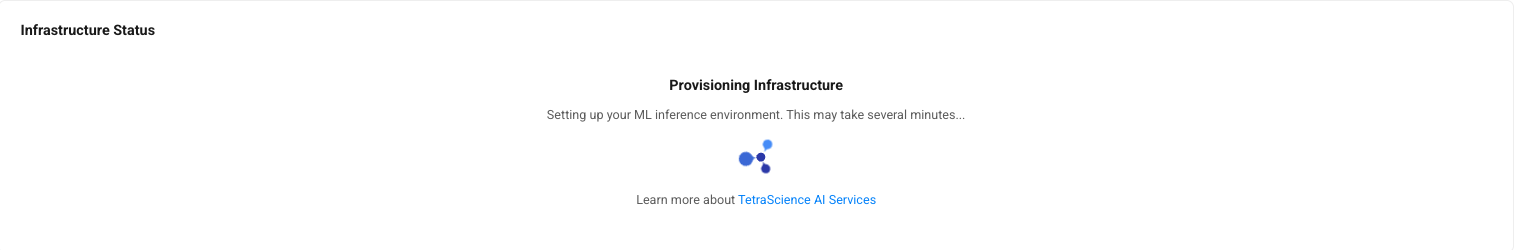

The infrastructure status appears. Setting up your inference environment may take several minutes.

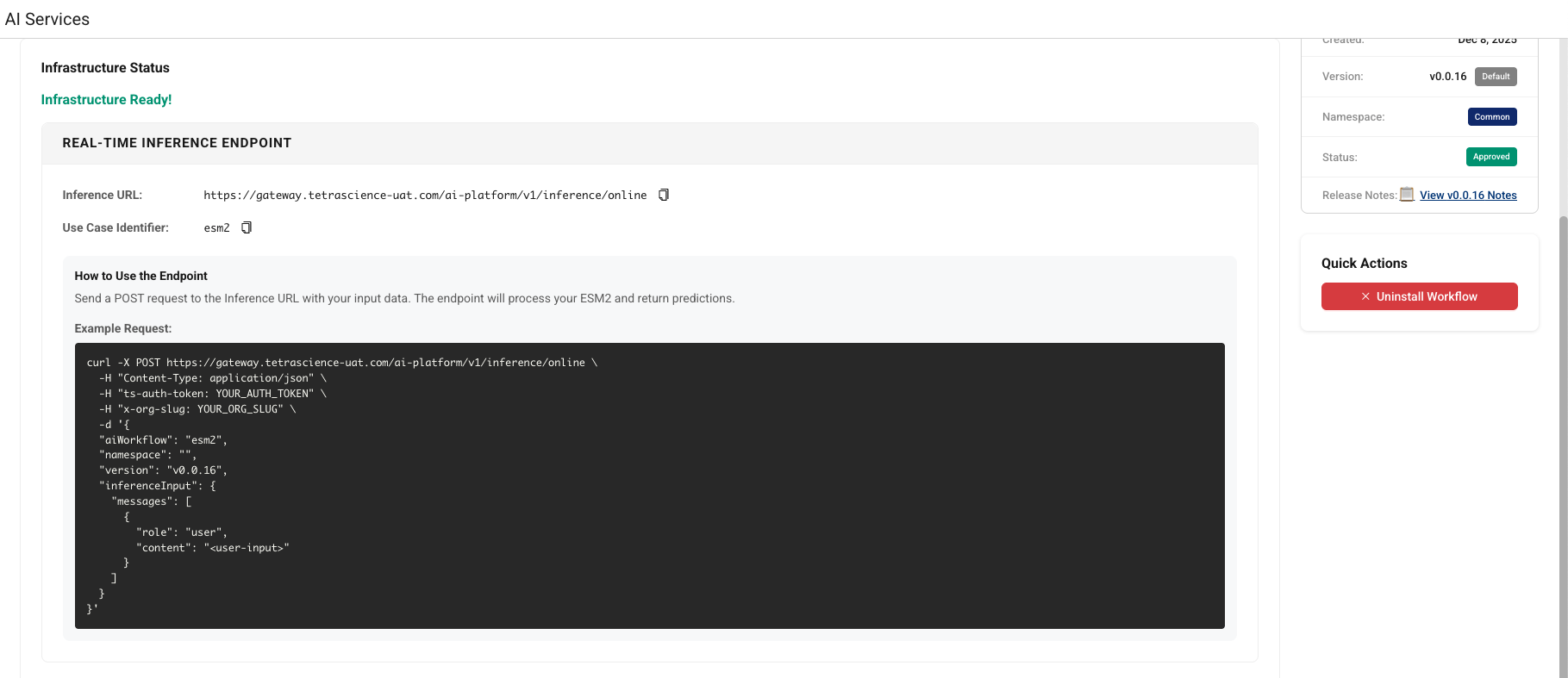

- Copy either the BATCH ENDPOINT or the REAL-TIME ENDPOINT, based on the type of inference you want to run. You can then send a POST request to the inference URL with your input data, and the endpoint will process it and return a response. For more information about how to run an inference using your installed AI workflow, see Run an Inference.

Run a Scientific AI Workflow Inference

TetraScience AI Services provides the following API endpoints that you can call using your installed AI workflow:

- Submit a real-time (synchronous) inference request (/inference/online): Invokes the appropriate LLM, AI agent, or custom model to return real-time predictions from text-based inputs.

- Submit a batch (asynchronous) inference request (/inference): Accepts inputs through JSON files or specified datasets for batch predictions and asynchronous processing.

- Invoke a custom notebook (/inference/invoke/*): Invokes any notebook in the AI Workflow, including training notebooks, with arbitrary JSON payloads and S3 input files.

For more information, see Run an Inference (AI Services v1.2.x).
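The choice between the real-time and batch endpoints can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the AI Services client: the base URL, the mode labels, and the payload shape are hypothetical, and the `ts-auth-token` and `x-org-slug` headers are borrowed from the promotion examples in this guide on the assumption that inference calls use the same headers. The request is built but never sent.

```python
import json
import urllib.request

# Paths come from the endpoint list above; the mode labels are hypothetical.
INFERENCE_PATHS = {
    "real-time": "/inference/online",
    "batch": "/inference",
}


def build_inference_request(base_url, mode, payload, token, org_slug):
    """Build (but do not send) a POST request for the given inference mode."""
    if mode not in INFERENCE_PATHS:
        raise ValueError(f"unknown inference mode: {mode!r}")
    url = base_url.rstrip("/") + INFERENCE_PATHS[mode]
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "ts-auth-token": token,    # assumed: same header as the promotion examples
            "x-org-slug": org_slug,    # assumed: same header as the promotion examples
            "Content-Type": "application/json",
        },
    )


request = build_inference_request(
    "https://gateway.example.com/ai-platform/v1",  # hypothetical base URL
    "real-time",
    {"input": "example text"},                     # hypothetical payload shape
    token="example-token",
    org_slug="example-org",
)
print(request.get_method(), request.full_url)
```

In practice you would use the endpoint URL copied from the workflow's page rather than assembling it by hand, and pass the request to `urllib.request.urlopen` once the payload matches what your workflow expects.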

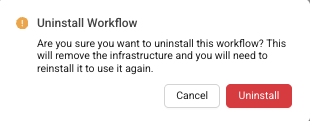

Uninstall a Scientific AI Workflow

To remove the compute resources for an AI workflow without deleting its artifacts or versions, do the following:

- Open the AI Services page as a user with one of the following policy permissions:

- Find the workflow that you want to uninstall.

- Choose the workflow tile's Details button. The AI Workflow's page appears.

- Choose Uninstall Workflow.

The Uninstall Workflow dialog appears and prompts you to confirm that you want to uninstall the workflow. Choose Uninstall.

The Scientific AI Workflow infrastructure is removed without deleting its artifacts or versions. To use the AI workflow again, you must reinstall it.

Monitor Scientific AI Workflow Jobs

To see a complete list of all Scientific AI Workflow installation and uninstallation requests, along with inference requests, see the TDP System Log.

You can also track failed inference jobs through the TDP Health Monitoring Dashboard, by doing the following:

- Sign in to the TDP as a user with one of the required policy permissions.

- In the left navigation menu, choose Health Monitoring. The Health Monitoring page appears with the Dashboard tab selected by default, which displays an end-to-end snapshot of your components' health for your entire TDP ecosystem.

- Select the Jobs tab. Then, for Artifact Type, select AI Workflow. A list of your TDP organization's Scientific AI Workflow job failures appears, including details about each failed job.

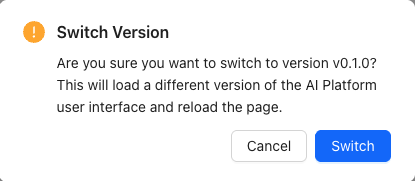

Update the AI Services UI Version

To update the AI Services user interface version in your TDP organization, do the following:

-

Open the AI Services page as a user with one of the following policy permissions :

-

In the Page Version dropdown, select the latest AI Services UI page version. A dialog appears that asks you to confirm that you want to update the AI Services UI to the latest version.

-

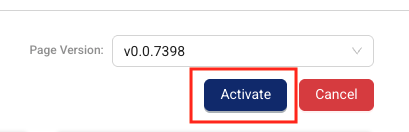

Choose Switch. The latest AI Services UI appears and becomes the default page version for your organization.

-

To activate the selected AI Services UI version for all users in the TDP organization, select Activate. A dialog appears prompting you to confirm by selecting Activate again.

Model Training API

TetraScience AI Services v1.2.0 introduces a Model Training API that allows you to invoke any notebook within an AI Workflow, including training notebooks, directly through the AI Services API. Use the POST /v1/inference/invoke/* endpoint to trigger model training, data prefetching, and other custom notebook tasks. The endpoint accepts arbitrary JSON payloads and S3 input files (using file IDs).

For more information, see Invoke a Custom Notebook in Run an Inference (AI Services v1.2.x).

Knowledge Base and Vector Store

TetraScience AI Services v1.2.x supports the creation and management of vectorized knowledge bases, powered by Databricks Vector Search. This capability enables AI use cases that require retrieval-augmented generation (RAG) and semantic search over enterprise knowledge bases.

You can use the Knowledge Base API to do the following:

- Create and manage vector stores scoped to your organization with role-based access control. When you delete a vector store, AI Services removes both the metadata record and the associated Databricks vector search endpoint.

- Upload and update knowledge base content through the API. When you update a vector store using a PUT request, AI Services re-parses newly added files, re-chunks them using the configured chunking mechanism, inserts them into the delta table, and issues a sync operation for incremental index catchup.

- Query vector stores using natural language text, without needing to manually generate embeddings.

- Upload knowledge base files through the AI Asset Files API, with support for multipart uploads for large files.

- Monitor vector store health and performance using key metrics piped to Amazon CloudWatch.

For more information, see Manage Knowledge Bases in Run an Inference (AI Services v1.2.x).
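The re-chunking step mentioned above can be pictured with a simple fixed-size chunker that overlaps adjacent chunks. This is an illustrative sketch only: this guide doesn't specify which chunking mechanism AI Services is configured with, and `chunk_text`, `chunk_size`, and `overlap` are hypothetical names chosen for the example.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into fixed-size character chunks that overlap by `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this chunk already reaches the end of the text
    return chunks


chunks = chunk_text("abcdefghij", chunk_size=4, overlap=1)
print(chunks)
```

Overlap keeps a sentence that straddles a chunk boundary retrievable from at least one chunk, at the cost of storing some text twice.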

Vector Store Observability

TetraScience AI Services captures key metrics for your vector stores and pipes them to Amazon CloudWatch. These metrics provide the foundation for performance evaluation and ongoing monitoring of vector store health. You can use CloudWatch dashboards and alarms to track vector store performance over time.

Model Aliases

AI Workflows can use model aliases to reference model versions by lifecycle stage instead of by version number. Supported aliases include dev, staging, canary, champion, prod, and rollback. Updating an alias automatically updates endpoint routing without requiring redeployment, enabling fast and safe model promotions and rollbacks.
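The idea that moving an alias changes routing without a redeployment can be sketched with a toy in-memory registry. This is a conceptual model, not the AI Services or Databricks API: `AliasRegistry` and its methods are hypothetical, and the choice to park the previously served version under the `rollback` alias during a promotion is an assumption made for the example.

```python
SUPPORTED_ALIASES = {"dev", "staging", "canary", "champion", "prod", "rollback"}


class AliasRegistry:
    """Toy alias table mapping an alias name to a model version number."""

    def __init__(self):
        self._aliases = {}

    def set_alias(self, alias, version):
        if alias not in SUPPORTED_ALIASES:
            raise ValueError(f"unsupported alias: {alias!r}")
        self._aliases[alias] = version

    def resolve(self, alias):
        """Return the version an alias currently points to (KeyError if unset)."""
        return self._aliases[alias]

    def promote(self, source_alias, target_alias):
        """Point target_alias at the version behind source_alias.

        Assumption for this sketch: the version previously behind
        target_alias is kept under the 'rollback' alias.
        """
        version = self.resolve(source_alias)
        previous = self._aliases.get(target_alias)
        self.set_alias(target_alias, version)
        if previous is not None:
            self._aliases["rollback"] = previous


registry = AliasRegistry()
registry.set_alias("prod", 3)
registry.set_alias("staging", 4)
registry.promote("staging", "prod")
print(registry.resolve("prod"), registry.resolve("rollback"))
```

Callers that always resolve `prod` pick up version 4 after the promotion without any change on their side, which is the property the alias mechanism relies on.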

Promote Assets Between Environments

NOTE

Model Promotion is available as a beta release feature and must be enabled by TetraScience. For more information, contact your customer account leader.

TetraScience AI Services supports promoting AI assets (models and tables) between TDP environments (for example, from a development environment to a production environment) using Databricks Delta Sharing. Every promotion creates an audit record that captures the asset that was promoted, the source and target environments, the timestamp, and the user who authorized the promotion. This provides a complete, immutable chain of custody for compliance reporting.

Prerequisites

Before you can promote a model between environments, the following are required:

- Model Promotion is enabled for your TDP environments by TetraScience.

- Both the source and target TDP environments are provisioned by TetraScience.

- The target catalog and schema in the destination environment already exist in Unity Catalog.

- You have a TDP role that includes at least one of the following policy permissions:

Promote an Asset

To promote a model or table from a source environment to a target environment, do the following:

- Initiate the promotion from the source environment. Send a POST /v1/promotions request that specifies the source asset (catalog, schema, and asset name) and the target environment. TetraScience AI Services creates an outbound promotion record, sets up the underlying Delta Share, and returns a promotionId that you use in subsequent calls.

POST /v1/promotions Request Example

    curl -s -X POST "https://<source-gateway>/ai-platform/v1/promotions" \
      -H "ts-auth-token: $TOKEN" -H "x-org-slug: $ORG_SLUG" \
      -H "Content-Type: application/json" \
      -d '{
        "sourceCatalog": "dev_catalog",
        "sourceSchema": "ml_models",
        "sourceAssetName": "molecule-predictor-v2",
        "targetEnvironment": "prod-environment-id"
      }'

POST /v1/promotions Response Example

    {
      "promotionId": "promo-550e8400-e29b-41d4-a716-446655440000",
      "status": "INITIATED"
    }

- Accept the promotion in the target environment. Send a POST /v1/promotions/{promotionId}/accept request that specifies the destination targetCatalog, targetSchema, and targetAssetName. The target catalog and schema must already exist in Unity Catalog. AI Services mounts the share, deep-clones the asset to the target location, and updates the promotion record.

POST /v1/promotions/{promotionId}/accept Request Example

    curl -s -X POST "https://<target-gateway>/ai-platform/v1/promotions/promo-550e8400-e29b-41d4-a716-446655440000/accept" \
      -H "ts-auth-token: $TOKEN" -H "x-org-slug: $ORG_SLUG" \
      -H "Content-Type: application/json" \
      -d '{
        "targetCatalog": "prod_catalog",
        "targetSchema": "ml_models",
        "targetAssetName": "molecule-predictor-v2"
      }'

POST /v1/promotions/{promotionId}/accept Response Example

    {
      "promotionId": "promo-550e8400-e29b-41d4-a716-446655440000",
      "status": "IN_PROGRESS",
      "targetCatalog": "prod_catalog",
      "targetSchema": "ml_models",
      "targetAssetName": "molecule-predictor-v2",
      "acceptedBy": "[email protected]",
      "acceptedAt": "2026-04-29T14:32:10Z"
    }

- Monitor promotion status. Send a GET /v1/promotions/{promotionId} request to check progress. The status moves through INITIATED → IN_PROGRESS → TRANSFERRED → COMPLETED. If the promotion fails, the status becomes FAILED and the response includes errorMessage and errorCode fields that you can use to diagnose the issue.

GET /v1/promotions/{promotionId} Request Example

    curl -s "https://<source-gateway>/ai-platform/v1/promotions/promo-550e8400-e29b-41d4-a716-446655440000" \
      -H "ts-auth-token: $TOKEN" -H "x-org-slug: $ORG_SLUG"

GET /v1/promotions/{promotionId} Response Example

    {
      "promotionId": "promo-550e8400-e29b-41d4-a716-446655440000",
      "status": "COMPLETED",
      "sourceCatalog": "dev_catalog",
      "sourceSchema": "ml_models",
      "sourceAssetName": "molecule-predictor-v2",
      "targetCatalog": "prod_catalog",
      "targetSchema": "ml_models",
      "targetAssetName": "molecule-predictor-v2",
      "targetEnvironment": "prod-environment-id",
      "initiatedBy": "[email protected]",
      "initiatedAt": "2026-04-29T14:30:00Z",
      "completedAt": "2026-04-29T14:35:22Z"
    }

When the promotion completes, the promoted asset is available in the target environment's Unity Catalog at the location you specified and can be used to install or run AI Workflows.
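The status-monitoring step can be scripted as a polling loop. The following Python sketch assumes only what the steps above state: the status ends at COMPLETED or FAILED, and a failed promotion carries errorCode and errorMessage. The `wait_for_promotion` helper, its parameters, and the `fetch_status` callback are hypothetical; `fetch_status` stands in for whatever code performs the GET /v1/promotions/{promotionId} call and returns the parsed JSON body.

```python
import time


def wait_for_promotion(fetch_status, promotion_id, interval_s=5.0,
                       max_attempts=60, sleep=time.sleep):
    """Poll a promotion until it reaches COMPLETED or FAILED.

    fetch_status(promotion_id) must return the promotion record as a dict.
    """
    for attempt in range(max_attempts):
        record = fetch_status(promotion_id)
        status = record["status"]
        if status == "COMPLETED":
            return record
        if status == "FAILED":
            raise RuntimeError(
                f"promotion {promotion_id} failed: "
                f"{record.get('errorCode')} {record.get('errorMessage')}"
            )
        if attempt < max_attempts - 1:
            sleep(interval_s)  # wait before the next status check
    raise TimeoutError(
        f"promotion {promotion_id} did not finish after {max_attempts} checks"
    )


# Stand-in for the real HTTP call: replays the documented status sequence.
_replies = iter(["INITIATED", "IN_PROGRESS", "TRANSFERRED", "COMPLETED"])


def fake_fetch(promotion_id):
    return {"promotionId": promotion_id, "status": next(_replies)}


result = wait_for_promotion(fake_fetch, "promo-123", sleep=lambda seconds: None)
print(result["status"])
```

Injecting `fetch_status` and `sleep` keeps the loop testable without a gateway. A real script would replace `fake_fetch` with a function that sends the GET request shown above.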

Remove a Promoted Asset

To remove a previously promoted asset from the target environment, send a POST /v1/promotions/{promotionId}/unpromote request from the source environment. AI Services drops the cloned asset from the target catalog, cleans up the underlying Delta Share, and records the removal in the promotion's audit history.

POST /v1/promotions/{promotionId}/unpromote Request Example

    curl -s -X POST "https://<source-gateway>/ai-platform/v1/promotions/promo-550e8400-e29b-41d4-a716-446655440000/unpromote" \
      -H "ts-auth-token: $TOKEN" -H "x-org-slug: $ORG_SLUG"

Review Promotion History

All promotion lifecycle actions (initiate, accept, complete, fail, unpromote) are recorded in the TDP System Log along with the user who authorized each action and the source and target environments. Use the System Log to review the promotion history of any asset for compliance and GxP audit reporting.

Known and Possible Issues

The following are known issues in TetraScience AI Services v1.2.1:

- Vector Store Creation Fails with 403 Permission Denied: When deploying a new vector store, the Databricks creation job may fail with a 403 PERMISSION_DENIED error when the create_endpoint.py notebook attempts to create the vector search endpoint. If you encounter this error, contact TetraScience Support.

- Vector Store Query Returns 502 Error After Reaching Ready Status: After a vector store successfully reaches ready status, querying it may return a 502 error from Databricks Model Serving. If you encounter this error, contact TetraScience Support.

Limitations

The following are known limitations of TetraScience AI Services:

- Task Script README Parsing: The platform uses task script README files to determine input configurations. Parsing might be inconsistent due to varying README file formats.

- AI Agent Accuracy: AI-generated information cannot be guaranteed to be accurate. Agents may hallucinate or provide incorrect information.

- Organization-Specific Components: AI capabilities are limited to publicly available TetraScience components and cannot incorporate organization-specific components.

- Complex Logic Implementation: AI-generated pipelines with complex logic may require manual implementation and refinement.

- Databricks Workspace Mapping: Initially, one TDP organization maps to one Databricks workspace only.

For more information, see the AI Services FAQs.

